AI Hallucination in Court Case Sparks Legal and Ethical Concerns

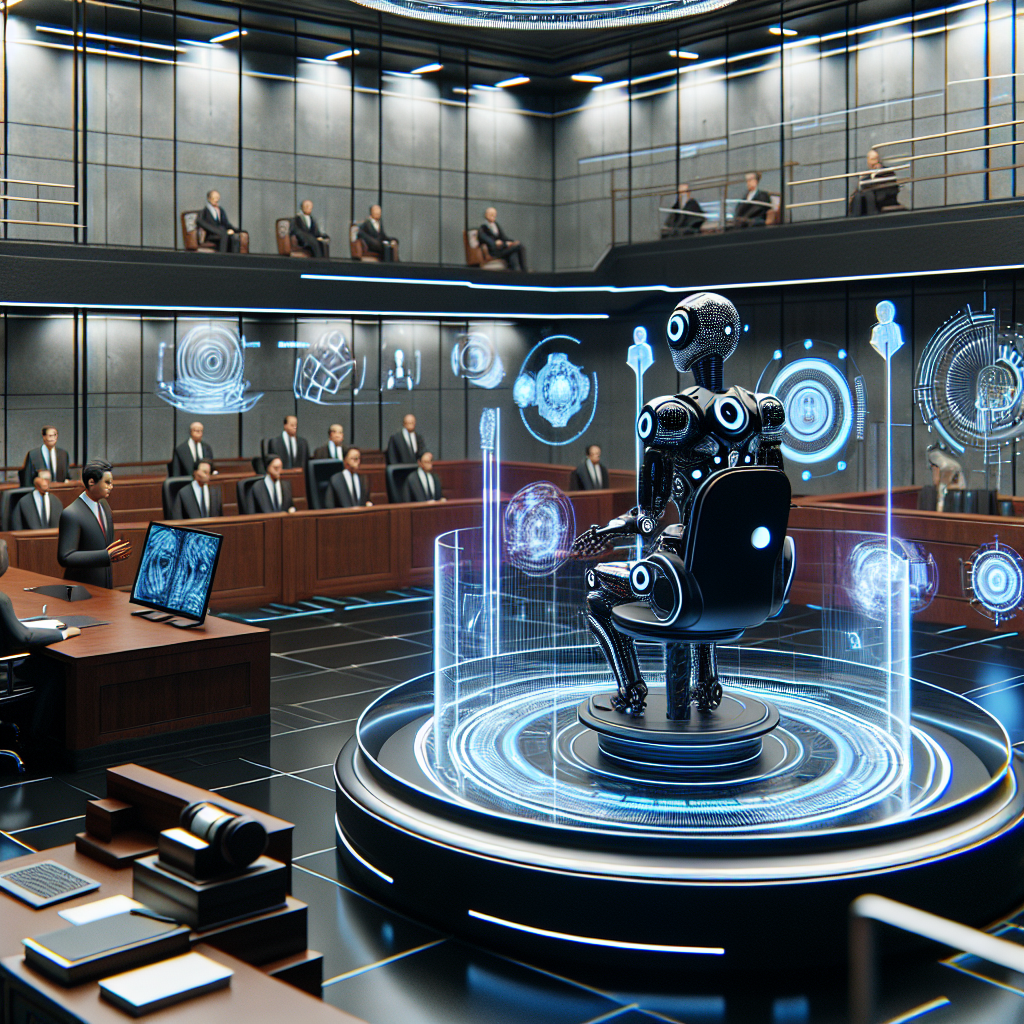

The Rise of AI in Legal Proceedings

With artificial intelligence now being integrated into nearly every industry, it’s no surprise that the legal system has begun embracing AI tools to streamline research, document drafting, and case assessment. However, a recent court case involving attorneys for MyPillow CEO Mike Lindell has underscored the risks of over-reliance on these systems, especially when they “hallucinate,” or generate fictional legal citations.

In July 2025, a case involving MyPillow CEO Mike Lindell drew widespread attention, not because of the political spotlight frequently cast on the company, but because of a troubling development: a court filing prepared with an AI tool cited legal cases that simply didn’t exist. The episode has reignited concerns about the ethical use of AI in the courtroom.

What Happened: AI-Generated Legal Citations Go Awry

The controversy arose when attorneys defending Lindell in a defamation lawsuit brought by Eric Coomer, a former employee of voting machine company Dominion Voting Systems, submitted a court filing that included fabricated legal case citations. These references were generated by an AI tool, likely one of the popular large language models (LLMs) now used for legal research, and had not been verified before submission.

The presiding judge was reportedly stunned to discover that the cases cited couldn’t be found in any official legal database. Upon further investigation, it became clear that the AI had “hallucinated” the cases, fabricating them based on predictive text algorithms instead of actual legal precedent.

Legal Consequences and Fines

Because of these fake citations, Lindell’s legal team faced serious repercussions. The judge imposed sanctions, fining the responsible attorneys for negligence. The court emphasized that lawyers have a professional obligation to ensure their filings are accurate and credible, regardless of whether AI tools are used to assist.

This incident underscores that:

- AI cannot be treated as a substitute for human legal expertise.

- Attorneys are still held fully accountable for inaccuracies in filings, even those generated by technology.

- The ethical rules governing legal practice must evolve to incorporate new AI challenges.

Understanding AI Hallucinations

One of the most pressing issues with language-based artificial intelligence is the phenomenon of “hallucination.” Unlike traditional databases or legal research tools, LLMs generate answers based on probability. If prompted to produce legal citations, the AI can stitch together plausible-sounding case names, judges, courts, and years — none of which are necessarily real.

These hallucinations occur because the AI isn’t designed to verify facts. It’s engineered to produce “most likely” responses, not accurate ones. For legal professionals, this highlights a fundamental gap in using AI tools:

AI generates content. It does not authenticate it.

That difference is subtle but significant when dealing with law, where every citation must be real and verifiable.
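To make the point concrete, here is a toy illustration in Python. It is not how an LLM works internally; it only mimics the outcome described above by sampling citation parts according to made-up weights. Every name, weight, and helper (`pick`, `generate_citation`, `CITATION_SHAPE`) is a placeholder invented for this sketch, and none of the strings refer to real cases.

```python
import random
import re

# Toy "vocabulary" with made-up weights. All entries are placeholders,
# not real parties or real cases.
PARTIES = {"Alpha Corp.": 0.5, "Beta LLC": 0.3, "Gamma Inc.": 0.2}
REPORTERS = {"F.3d": 0.6, "F.4th": 0.4}
COURTS = {"1st Cir.": 0.5, "2d Cir.": 0.3, "3d Cir.": 0.2}


def pick(weighted: dict[str, float], rng: random.Random) -> str:
    """Sample one key in proportion to its weight."""
    return rng.choices(list(weighted), weights=list(weighted.values()), k=1)[0]


def generate_citation(rng: random.Random) -> str:
    """Assemble a citation-shaped string from likely-looking parts.

    Nothing here consults a case database, so the result is only
    plausible. It may even be internally inconsistent.
    """
    plaintiff = pick(PARTIES, rng)
    defendant = pick(PARTIES, rng)
    volume = rng.randint(1, 999)
    page = rng.randint(1, 1500)
    year = rng.randint(1990, 2024)
    return (f"{plaintiff} v. {defendant}, {volume} {pick(REPORTERS, rng)} "
            f"{page} ({pick(COURTS, rng)} {year})")


# A format check passes, which is exactly the problem: being well formed
# says nothing about whether the case exists.
CITATION_SHAPE = re.compile(r"^.+ v\. .+, \d+ .+ \d+ \(.+ \d{4}\)$")

rng = random.Random(0)
sample = generate_citation(rng)
print(sample)
print(bool(CITATION_SHAPE.match(sample)))
```

The output looks like a citation and passes a formatting check, yet no step in the program ever asked whether the case is real.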

Why Lawyers Must Verify AI Outputs

While AI tools can be incredibly helpful for drafting documents, summarizing arguments, or organizing complex case details, they must always be used with discretion. Lawyers need to verify:

- Each cited case and its details against verified legal databases like LexisNexis or Westlaw.

- The jurisdiction and relevance of each citation.

- That the AI-generated content aligns with the current legal framework and precedents.

Failure to do this isn’t just a practical oversight — it can lead to legal malpractice, regulatory sanctions, and damage to professional credibility.
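The first two checks in the list above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: `TRUSTED_INDEX` is a hypothetical in-memory stand-in for a lookup against a verified legal database, the `Citation` type and `check_citation` function are invented for illustration, and every entry is a placeholder rather than a real case. It does not use or represent any vendor's actual API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    case_name: str
    reporter_cite: str  # placeholder format, e.g. "100 X.2d 200"
    jurisdiction: str


# Hypothetical stand-in for a verified legal database.
# Entries are placeholders, not real cases.
TRUSTED_INDEX = {
    "100 X.2d 200": Citation(
        "Placeholder A v. Placeholder B", "100 X.2d 200", "State Y"
    ),
}


def check_citation(cite: Citation, required_jurisdiction: str) -> list[str]:
    """Return a list of problems; an empty list means the basic checks passed."""
    record = TRUSTED_INDEX.get(cite.reporter_cite)
    if record is None:
        # The citation cannot be found at all: the classic hallucination.
        return ["not found in trusted index"]
    problems = []
    if record.case_name != cite.case_name:
        problems.append("case name does not match the indexed record")
    if record.jurisdiction != required_jurisdiction:
        problems.append("citation is from a different jurisdiction")
    return problems


draft = [
    Citation("Placeholder A v. Placeholder B", "100 X.2d 200", "State Y"),
    Citation("Invented C v. Invented D", "999 X.2d 1", "State Y"),
]
for cite in draft:
    print(cite.reporter_cite, check_citation(cite, "State Y") or "ok")
```

A check like this only catches citations that are missing or mismatched. Whether a real case actually supports the argument, and whether it is still good law, remains a judgment an attorney has to make by reading it.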

Ethical Implications of AI in Law

Beyond the courtroom drama and monetary penalties, the larger concern is the ethical framework governing AI in the legal space. This incident has prompted serious questions:

- Who is responsible for AI-generated errors: the developer or the user?

- Should AI output come with disclaimers in professional settings like courtrooms?

- What policies should law firms implement before allowing AI-driven tools into sensitive workflows?

Legal institutions and bar associations are now being urged to issue clearer guidance on the use of AI. As jurisdictions work to adapt, many expect new ethical standards and continuing legal education (CLE) requirements aimed at training professionals on AI limitations.

Future of AI in the Legal System

Despite incidents like the MyPillow case, AI is not leaving the legal profession any time soon. In fact, as AI tools become more refined and regulators establish clearer guardrails, they will likely play an increasingly integral role in:

- Discovery processes

- Drafting briefs

- Researching legal precedent

- Predictive analytics for case outcomes

But the lesson from this case is clear: AI must be treated as a supplementary tool, not a replacement for human discernment.

Building Trustworthy AI Legal Tools

To prevent future incidents, AI developers and legal professionals must collaborate on building more reliable, transparent AI platforms tailored specifically to the courtroom. These platforms should:

- Integrate with verifiable legal databases

- Include transparency logs of how citations were generated

- Offer users the ability to fact-check citations in real time

Only then can AI safely and ethically serve the legal system.
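As a rough idea of what a transparency log might look like, the Python sketch below records where each citation came from and whether it was verified. It is an assumption-laden illustration, not a description of any existing product: `CitationLogEntry`, `log_citation`, `to_json_lines`, and the `known_citations` set are all hypothetical names, and the citations are placeholders.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class CitationLogEntry:
    citation: str
    origin: str      # "model" if generated, "database" if retrieved
    verified: bool   # True only if found in the trusted set
    checked_at: str  # ISO 8601 timestamp in UTC


def log_citation(
    citation: str, origin: str, known_citations: set[str]
) -> CitationLogEntry:
    """Record a citation's origin and whether it appears in the trusted set."""
    return CitationLogEntry(
        citation=citation,
        origin=origin,
        verified=citation in known_citations,
        checked_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_lines(entries: list[CitationLogEntry]) -> str:
    """Serialize the log as one JSON object per line for later auditing."""
    return "\n".join(json.dumps(asdict(entry)) for entry in entries)


# Placeholder data standing in for a verified legal database.
known = {"100 X.2d 200"}
entries = [
    log_citation("100 X.2d 200", "database", known),
    log_citation("999 X.2d 1", "model", known),
]
print(to_json_lines(entries))

# Anything unverified is surfaced for human review before filing.
unverified = [entry.citation for entry in entries if not entry.verified]
print(unverified)
```

The design choice here is that the tool never silently drops or "fixes" an unverified citation. It flags it, so the attorney who signs the filing sees exactly which references still need checking.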

Conclusion: A Wake-Up Call for the Legal Industry

The MyPillow court filing debacle is more than just an embarrassing courtroom blunder — it’s a wake-up call to the legal community regarding the power and pitfalls of artificial intelligence. As we enter a new era where machines assist with complex tasks, accountability, ethics, and human oversight remain crucial.

Attorneys must understand that AI doesn’t absolve them of responsibility; it heightens the need for professional vigilance.

As legal professionals adapt to an AI-integrated world, those who combine innovation with integrity will redefine the future of justice. But let this case be a cautionary tale: technology without training or ethical restraint is a liability, not an asset.