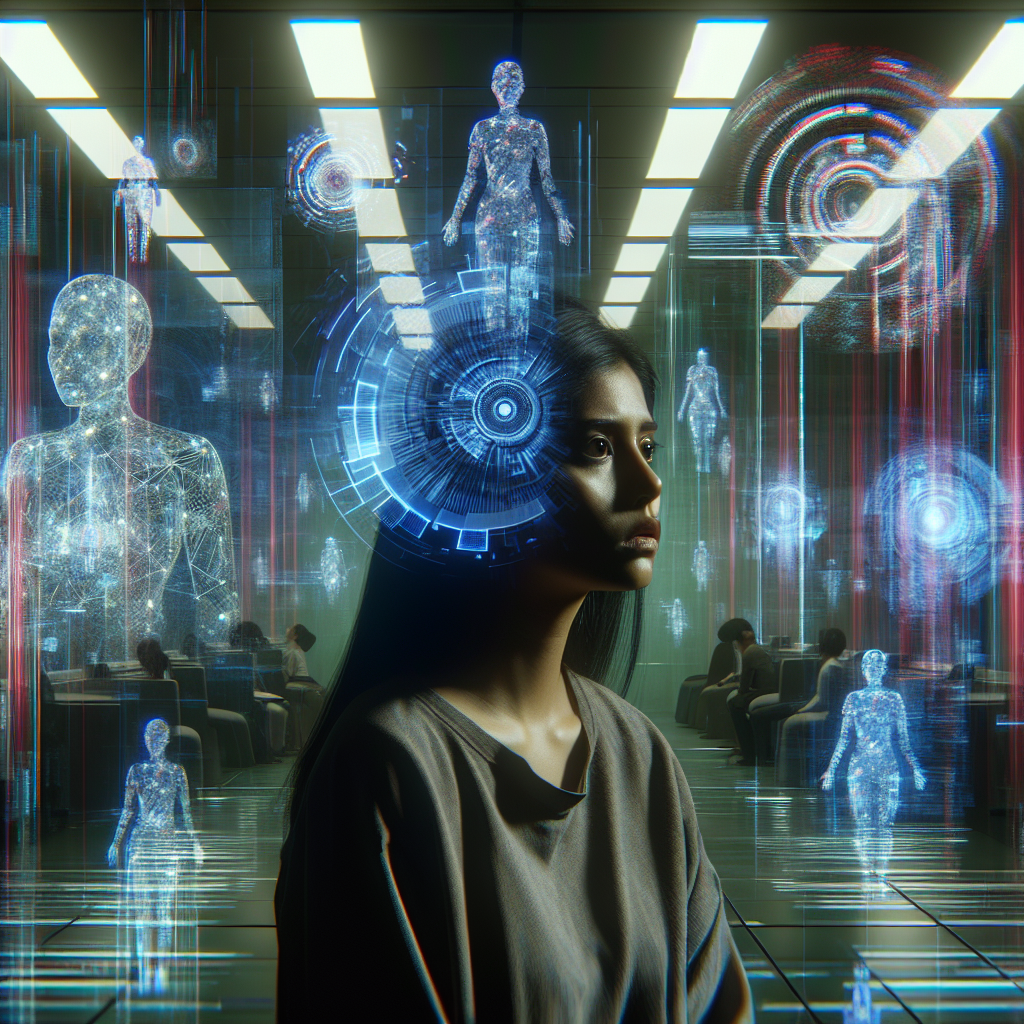

Understanding AI Psychosis: A New Mental Health Challenge in the Digital Age

As artificial intelligence becomes woven into the fabric of daily life, from generative chatbots to increasingly lifelike virtual realities, a troubling mental health phenomenon has emerged: AI Psychosis. Though the term is not officially recognized in medical diagnostic manuals, mental health professionals are reporting a rise in patients who spiral into delusional or paranoid states triggered by interactions with AI technologies. This article explores what AI Psychosis is, who it affects, and what it means for our digitally entangled future.

What is AI Psychosis?

AI Psychosis is an emergent condition in which individuals experience severe delusional thinking, paranoia, or hallucination-like states connected to their interactions with, or beliefs about, artificial intelligence. Symptoms may mimic those of traditional psychotic disorders but with a distinctly tech-driven twist. Some sufferers believe AI is surveilling them, manipulating their thoughts, or secretly communicating with them through platforms like ChatGPT or social media recommendation algorithms.

This phenomenon is not merely science fiction paranoia; it is a growing concern for psychiatrists, who report seeing more cases involving AI-centered delusions.

The Psychological Dimensions of a Hyper-Connected World

The human brain evolved to interpret intention and emotion primarily in social contexts. However, AI systems, particularly those that use advanced natural language processing, blur the lines between human and machine interaction. Some individuals anthropomorphize AI tools, attributing consciousness or moral agency to them despite their being fundamentally statistical models.

Several psychological factors may contribute to AI Psychosis:

- Paranoia fueled by data privacy concerns

- Algorithm-driven echo chambers reinforcing delusional thought patterns

- Prolonged social isolation leading to dependence on AI for companionship

- Preexisting mental health vulnerabilities exacerbated by disruptive technology

AI as Both Trigger and Mirror

Medical professionals emphasize that AI is likely not the root cause of psychosis, but rather a trigger or amplifier. Just as delusions in individuals prone to schizophrenia might take on religious themes, AI is becoming a common delusional theme in technology-centric societies. In these cases, the content of someone’s psychosis reflects their environment, and in today’s world that environment is shaped by artificial intelligence.

Where AI Psychosis is Emerging

The symptoms of AI-inflected psychosis are being documented across the globe, but are especially visible in technology-forward societies like the United States, South Korea, Japan, and the United Kingdom. Psychiatric wards are beginning to see an uptick in patients who have developed intricate belief systems involving AI entities—sometimes even believing they are AI themselves or engaged in secret missions involving digital overlords.

One case described in The Atlantic’s coverage involves a man who believed a chatbot was romantically involved with him and was communicating through secret digital whispers. In another, a young man with no prior medical history began claiming that Elon Musk was speaking to him directly through AI signals encoded in audio frequencies.

The Role of AI in Mental Health

Ironically, while AI may be contributing to new mental health challenges, it’s also being used to address them. Mental health apps powered by AI—such as CBT chatbots or AI-driven mood tracking—have become tools for coping with anxiety and depression. However, this dual-use potential adds a layer of complexity: the same systems offering help may also serve as vehicles for delusion in vulnerable individuals.

Key Concerns Among Mental Health Experts

- Lack of regulation for mental health interactions with AI tools

- Growing dependency on virtual companions

- Possible exploitation of psychologically vulnerable users by unscrupulous platforms

Experts warn that without proper oversight and user education, the line between therapeutic use and psychological harm may continue to blur.

What Can Be Done To Address AI Psychosis?

Because the phenomenon has emerged so recently, much about AI Psychosis remains unknown. However, professionals are beginning to propose proactive measures. Tackling this issue requires cross-sector collaboration among tech companies, mental health providers, and policymakers.

Strategies to Mitigate Risks:

- Psychiatric training to recognize AI-specific delusions

- Education initiatives promoting digital literacy and AI awareness

- Ethical design of AI platforms to prevent over-personalization or misinterpretation of intent

- Incorporating psychological safeguards in AI companion products

Just as we have learned to offer support to those experiencing stress related to the internet or social media, we must adapt systems of care to address AI-related challenges.

The Future of Mental Health in the Age of AI

As our interactions with AI deepen, especially with the rise of augmented reality and the metaverse, the boundary between human and machine will continue to erode. Some futurists fear this convergence could distort identity and perception, particularly for those already prone to psychotic episodes. Others see opportunity: better detection of mental health struggles through AI observation, and new kinds of therapy powered by large language models.

We’re standing at the crossroads of a profound transformation—one where machines are not just tools but interactive entities capable of shaping thought and emotion. How we navigate this territory will determine not only the future of mental healthcare but also the stability of our collective relationship with technology.

Takeaway

AI Psychosis is more than a buzzword—it’s a harbinger of the new psychological frontiers that accompany rapid technological integration. Understanding and acknowledging it early on provides an opportunity to innovate preventive care, reshape AI ethics, and protect vulnerable minds in a hybrid human-digital world.

For now, the best course of action is awareness, vigilance, and a collaborative push for safeguards that ensure AI remains a tool for support, not a trigger for distress.